The Dawn of 4D Imaging: How a Large-Scale Coherent Sensor Is Redefining Machine Vision

A 4D imaging sensor described in a recent Nature publication marks a significant advance in machine vision. By integrating over 600,000 photonic components on a single chip, it captures three-dimensional spatial data plus radial velocity as a fourth dimension. The result is, in effect, a CMOS-camera equivalent for multidimensional imaging, one that could give autonomous vehicles, augmented-reality systems, and other machines a more detailed and dynamic picture of their environment.

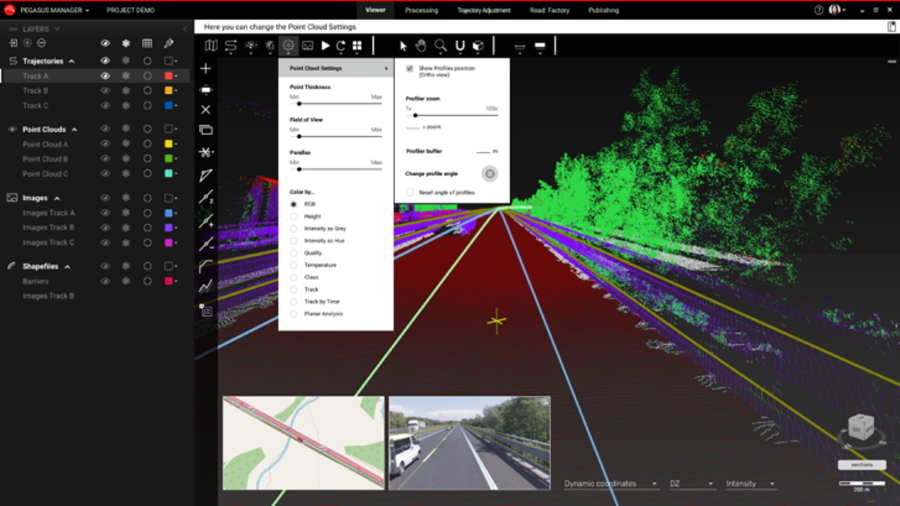

Machine perception is advancing with the development of large-scale coherent 4D imaging sensors. The sensor described in the Nature publication implements frequency-modulated continuous-wave (FMCW) light detection and ranging (LiDAR) in a focal plane array (FPA), enabling machines to perceive the world in four dimensions: three spatial dimensions plus radial velocity. This addresses the need for scalable, high-performance 3D mapping in dynamic environments, which is essential for safe human-machine interaction and for automation across many industries.

Understanding the 4D Imaging Architecture

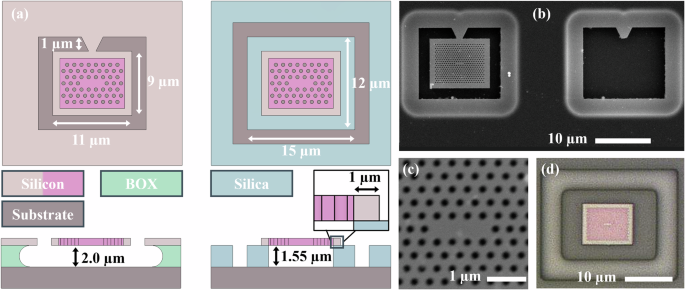

At its core, the sensor is a 352 × 176-pixel 2D FMCW LiDAR focal plane array, or 61,952 pixels in total. This is a fivefold increase in pixel count over previous demonstrations. The chip comprises over 0.6 million photonic components, monolithically integrated with their associated electronics. The system functions like a CMOS camera for multidimensional imaging: the field of view and range are set by the chosen lens system, so the same sensor can be adapted to different applications by changing the optics.

The sensor's architecture is built around a monostatic pixel design. This means the same grating couplers that transmit the frequency-modulated light also collect the backscattered light from the target. This configuration, combined with coherent heterodyne detection using balanced germanium photodetectors within each pixel, inherently prevents optical cross-coupling and eliminates the need for complex alignment of separate inbound and outbound optical paths.

The Role of FMCW LiDAR and Coherent Detection

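The sensor employs a frequency-modulated continuous-wave (FMCW) detection scheme. The laser's optical frequency is swept linearly (chirped), and light returning from a target arrives delayed by the round-trip time. Mixing that return with a local oscillator, a copy of the outgoing light, produces a beat frequency that, for a stationary target, is directly proportional to the target's distance. A moving target adds a Doppler shift that moves the beat frequency in opposite directions during up-chirps and down-chirps. Measuring both therefore separates the two effects: the average of the two beat frequencies gives the range, and half their difference gives the radial velocity, the fourth dimension.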
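The up-chirp and down-chirp relationship can be illustrated with a short Python sketch. It is a textbook triangular-chirp FMCW model, not the signal chain described in the paper. The chirp bandwidth, chirp duration, and 1550 nm wavelength below are illustrative assumptions that do not come from the source, and the sign convention (positive velocity means the target is approaching) is likewise a choice made for this example.

```python
C = 299_792_458.0  # speed of light in m/s


def beat_frequencies(range_m, velocity_mps, bandwidth_hz, chirp_s, wavelength_m):
    """Forward model: beat frequencies for an up-chirp and a down-chirp.

    Assumes a linear triangular chirp and a target whose range beat
    exceeds its Doppler shift, so both beat frequencies stay positive.
    Positive velocity means the target approaches the sensor.
    """
    slope = bandwidth_hz / chirp_s              # chirp rate in Hz per second
    f_range = 2.0 * range_m * slope / C         # beat caused by round-trip delay
    f_doppler = 2.0 * velocity_mps / wavelength_m  # Doppler shift of the return
    return f_range - f_doppler, f_range + f_doppler


def range_and_velocity(f_up_hz, f_down_hz, bandwidth_hz, chirp_s, wavelength_m):
    """Inverse model: recover range and radial velocity from the two beats."""
    slope = bandwidth_hz / chirp_s
    f_range = 0.5 * (f_up_hz + f_down_hz)       # average isolates the range term
    f_doppler = 0.5 * (f_down_hz - f_up_hz)     # half-difference isolates Doppler
    return C * f_range / (2.0 * slope), wavelength_m * f_doppler / 2.0


# Hypothetical chirp parameters, chosen only for illustration.
BANDWIDTH_HZ = 1.0e9
CHIRP_S = 10.0e-6
WAVELENGTH_M = 1.55e-6

f_up, f_down = beat_frequencies(65.0, 10.0, BANDWIDTH_HZ, CHIRP_S, WAVELENGTH_M)
rng, vel = range_and_velocity(f_up, f_down, BANDWIDTH_HZ, CHIRP_S, WAVELENGTH_M)
print(f"range = {rng:.3f} m, radial velocity = {vel:.3f} m/s")
```

With these assumed parameters the round trip recovers the 65 m range and 10 m/s velocity that were fed into the forward model. A real sensor would first have to estimate the two beat frequencies from noisy photocurrent, typically with a Fourier transform, which this sketch leaves out.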

Coherent detection offers several key advantages. Only light that is coherent with the local oscillator produces a beat signal within the detection band, so the system is highly resistant to external optical interference from sources such as sunlight or other LiDARs, a significant challenge for traditional direct-detection LiDAR. Mixing with the local oscillator also amplifies the weak return signal, so much less emitted optical power is needed to reach the same signal-to-noise ratio. That improves eye safety, a paramount concern for consumer and automotive applications.
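Both advantages can be seen in an idealized model of a balanced detector pair, sketched below in Python. The model assumes a perfect 50/50 coupler, unit responsivity, no noise, and ambient light that behaves as a constant power split equally between the two photodiodes. The function name and the power values are hypothetical, and none of this is taken from the paper's circuit design.

```python
import math


def balanced_output(lo_power, signal_power, ambient_power, beat_hz, t):
    """Difference signal of an ideal balanced photodetector pair.

    A 50/50 coupler sends half of every optical power to each photodiode.
    The interference between local oscillator (LO) and signal appears with
    opposite sign on the two ports, so subtracting them keeps the beat term
    and cancels everything that is common to both ports.
    """
    common = 0.5 * (lo_power + signal_power + ambient_power)
    beat = math.sqrt(lo_power * signal_power) * math.cos(2.0 * math.pi * beat_hz * t)
    port_a = common + beat
    port_b = common - beat
    return port_a - port_b


# Hypothetical powers in watts: a strong LO and a very weak return signal.
LO_W = 1.0e-3
SIGNAL_W = 1.0e-12

dark = balanced_output(LO_W, SIGNAL_W, 0.0, 1.0e6, 0.0)
sunny = balanced_output(LO_W, SIGNAL_W, 1.0e-6, 1.0e6, 0.0)
print(f"beat amplitude without ambient light: {dark:.3e}")
print(f"beat amplitude with ambient light:    {sunny:.3e}")
```

In this idealization the ambient term drops out of the difference, and the beat amplitude is twice the square root of the product of LO and signal power. Raising the LO power therefore lifts a weak return signal without emitting more light at the target, which is the mechanism behind the lower emitted power mentioned above.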

Performance and Capabilities of the Imaging System

The demonstrated sensor achieves performance that approaches the needs of commercial applications. It has shown a radial measurement range of up to 65 meters on targets with an estimated 30% reflectivity. With an angular resolution of 0.06° and frame rates from 3 to 15 frames per second, it provides dense, real-time point clouds of dynamic scenes.
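To put these figures in context, the Python sketch below derives the point rate and spatial sampling they imply. It rests on two assumptions that the source does not state: every pixel contributes one point per frame, and the 0.06° angular resolution corresponds to a uniform per-pixel angular pitch for the lens used in the demonstration. The function names are hypothetical.

```python
import math

COLS, ROWS = 352, 176        # array size given in the article
ANGULAR_RES_DEG = 0.06       # angular resolution given in the article


def points_per_second(frames_per_second):
    """Point rate, assuming each pixel yields one point per frame."""
    return COLS * ROWS * frames_per_second


def field_of_view_deg():
    """Implied field of view, assuming a uniform 0.06 degree pixel pitch."""
    return COLS * ANGULAR_RES_DEG, ROWS * ANGULAR_RES_DEG


def lateral_spacing_m(range_m):
    """Spacing between neighbouring points on a surface at the given range."""
    return range_m * math.tan(math.radians(ANGULAR_RES_DEG))


for fps in (3, 15):
    print(f"{fps} fps -> {points_per_second(fps)} points per second")

h_fov, v_fov = field_of_view_deg()
print(f"implied field of view: {h_fov:.2f} x {v_fov:.2f} degrees")
print(f"point spacing at 65 m: {lateral_spacing_m(65.0) * 100:.1f} cm")
```

Under these assumptions, the 15 frames-per-second mode corresponds to 929,280 points per second, and neighbouring points at the 65-meter range limit sit roughly 7 cm apart. Because the field of view depends on the lens, the implied angles apply only to a lens that produces the stated angular resolution.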

One of the most notable achievements is its energy efficiency. The system uses as little as 46 nJ of energy per point, with an average on-target optical power of just 178 µW per pixel. This low-power operation is crucial for mobile and embedded applications. The research indicates that simple design modifications to increase local oscillator power would let the system operate in the shot-noise-limited regime, improving the signal-to-noise ratio by approximately 5.6 dB (a factor of about 3.6) and potentially extending the detection range beyond 200 meters.

Applications and Future Impact

The implications of this technology are broad. A compact, low-cost, and scalable 4D imaging sensor could underpin the next generation of autonomous systems. In autonomous vehicles, measuring distance and velocity simultaneously at every pixel allows more accurate trajectory prediction for pedestrians, cyclists, and other vehicles, even in poor lighting conditions, because the sensor supplies its own illumination.

Beyond automotive, this sensor technology is poised to impact robotics, where precise navigation and manipulation in unstructured environments are required; augmented and virtual reality, for more immersive and interactive experiences; and industrial automation, for quality control and logistics. Its eye-safe operation and camera-like form factor also open doors for applications in consumer electronics and smart infrastructure.

The Path to Ubiquitous Adoption

The research highlights that this demonstration brings sensor resolution within the requirements of most applications. The monolithic integration of all photonic and electronic components on a single chip using a standard 300-mm silicon photonics foundry process is the key to achieving the cost structure necessary for widespread adoption. Future work will focus on improving the signal-to-noise ratio, increasing optical power delivery through advanced Si-SiN waveguide architectures, and refining the pixel layout to eliminate gaps in the far-field pattern.

As noted in the study, this coherent 4D imaging FPA sensor "offers a compact, low-cost, scalable and adaptable 4D imaging solution, effectively proposing a CMOS camera equivalent for the multidimensional imaging of the world." This marks a pivotal step toward machines that can see and understand the world with a depth and dynamism that rivals, and in some aspects surpasses, human perception.