AI, Disinformation, and Geopolitics: The Tech Industry's Role in Modern Conflict

The escalating conflict between the US and Iran has revealed how deeply technology is now intertwined with modern warfare. AI companies are negotiating military contracts, traders are wagering on geopolitical outcomes through prediction markets, and social media platforms have become cesspools of disinformation, leaving the tech industry at the center of an international conflict. This article examines how artificial intelligence, disinformation campaigns, and financial speculation are reshaping warfare, politics, and global stability in real time.

The intersection of technology and geopolitics has reached a critical inflection point as the conflict between the United States and Iran intensifies. What began as military strikes has rapidly evolved into a multidimensional conflict in which artificial intelligence companies, social media platforms, and prediction markets play increasingly significant roles. This convergence of technology and warfare represents a fundamental shift in how international conflicts unfold, with implications for global stability, information integrity, and the ethical limits of technological development.

The Disinformation Battlefield: X's Role in Modern Conflict

Social media platforms, particularly X (formerly Twitter), have become primary battlegrounds for information warfare during the US-Iran conflict. According to WIRED's reporting, the platform has been flooded with misleading claims about the locations and scale of attacks, some of which have racked up millions of views. The disinformation ranges from AI-generated images to video game scenes passed off as real footage, creating a chaotic information environment that undermines public understanding of the conflict.

The problem has been exacerbated by X's decision to eliminate most of its public safety team and content moderators and to rely instead on Community Notes, which often appear too late to counteract viral misinformation. As noted in the WIRED podcast analysis, "by the time a community note gets on there, it's already been viewed 4 million times." This is the culmination of years of platform decisions that have made X increasingly hostile to journalists and fact-checkers while rewarding engagement driven by controversial content.

AI Companies and Military Contracts: Ethical Boundaries Tested

The Pentagon's Push for AI Integration

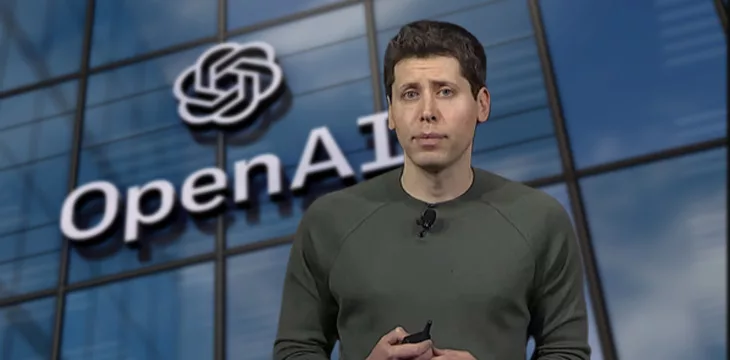

The conflict has unfolded against the backdrop of high-stakes negotiations between major AI companies and the US Department of Defense. Just days before the strikes on Iran, OpenAI reached a deal with the Pentagon, while Anthropic was locked in contentious talks with the same department. Anthropic insisted on conditions including a ban on surveillance of American citizens and restrictions on building fully autonomous weapons, which created friction with military officials who view the technology as essential to national security.

As reported in the WIRED analysis, the Pentagon's perspective is clear: "They very much view this technology as theirs. The idea of this ownership, the idea that, no, this was made in America, you made it for you, but it's for us." This tension highlights the growing divide between tech companies' ethical frameworks and military imperatives during times of conflict.

Talent Wars and Ethical Positioning

The military negotiations have significant implications for the ongoing talent war among AI labs. Anthropic has positioned itself as the more ethically conscious alternative to OpenAI, particularly after OpenAI CEO Sam Altman publicly acknowledged that his company's Pentagon deal was rushed. As discussed in the WIRED podcast, this positioning matters for recruiting top research talent, since many researchers come from academic backgrounds and hold idealistic views about how their work will be applied.

The reality, however, is more complex. Despite Anthropic's public stance, its products were used extensively in the initial strikes on Iran, with a phase-out period of only six months. This gap between public positioning and practical deployment raises questions about how effectively AI companies can maintain ethical boundaries while pursuing government contracts.

Prediction Markets: Gamifying Geopolitical Conflict

The Ethics of Betting on Human Lives

Prediction markets like Polymarket and Kalshi have emerged as controversial players in the conflict, with millions of dollars being wagered on geopolitical outcomes. One of the top bets on Polymarket asks whether "the Iran regime will fall by June 30th," with approximately $7 million in total bets on that market alone. More disturbingly, a $54 million market focused on the fate of Iran's supreme leader raised ethical questions about betting on human lives under the guise of political outcomes.

As noted in the WIRED analysis, these markets often find "cute ways around" prohibitions on betting on deaths, producing what one commentator described as a "gamified grotesque" version of conflict. The platforms have taken little enforcement action against insider trading, suspending only a few accounts despite evidence that employees at major tech companies have used confidential information to profit from prediction markets.

Political Connections and Regulatory Challenges

The prediction market industry has developed significant political connections, particularly with the Trump family. Donald Trump Jr. serves as an advisor to both Kalshi and Polymarket, and his venture capital firm has invested in Polymarket. The Trump family is also planning their own prediction market offering called Truth Predict through their Truth Social platform. These connections have created a regulatory environment where enforcement is minimal, despite growing concerns about insider trading and the ethical implications of betting on geopolitical events.

The concern grows when one considers that individuals with access to sensitive information could profit from these markets. As discussed in the WIRED podcast, "even just the perception that policy could be made based off of looking to score a quick buck, that's damaging in and of itself."

Media Consolidation and Political Influence

The potential acquisition of Warner Bros. Discovery by Paramount Skydance represents another dimension of how business and politics intersect in the current geopolitical climate. The $110 billion deal would give Larry and David Ellison control over major media properties including CNN, HBO, DC Comics, and numerous cable networks. The consolidation comes amid concerns about political influence, particularly given the Ellisons' ties to the Trump administration and the administration's reported pressure on rival bidders such as Netflix.

As analyzed in the WIRED discussion, journalists at affected news organizations are "freaking out" about potential ideological purges and job losses. The situation reflects broader trends of media consolidation and political influence that could reshape how conflicts are reported and understood by the public.

Future Implications and Ethical Considerations

The convergence of technology and conflict raises profound questions about the future of warfare, information integrity, and ethical boundaries. The current situation suggests several troubling trends that could intensify in coming years:

- Increasing integration of AI in military operations with potentially reduced human oversight

- Continued erosion of information integrity on social platforms during crises

- Expansion of prediction markets into increasingly sensitive areas with minimal regulation

- Greater political influence over media and technology companies during times of conflict

As the conflict between the US and Iran continues to evolve, the role of technology companies will likely become even more significant. The ethical dilemmas faced by AI researchers, the information challenges created by social media platforms, and the regulatory gaps surrounding prediction markets all point toward a future where technology and conflict are increasingly inseparable. Addressing these challenges will require coordinated efforts between governments, technology companies, and civil society to establish clear ethical boundaries and regulatory frameworks.