The End of Discrete Chip Purchases: Why AI Companies Now Need Complete Computing Ecosystems

The era of tech giants buying individual chips for specific functions is ending as AI companies increasingly require comprehensive computing ecosystems. Nvidia's recent multibillion-dollar deal with Meta exemplifies this shift: buyers now want integrated solutions that combine GPUs, CPUs, and specialized hardware. The change is driven by the demands of agentic AI and inference computing, which extend beyond the traditional focus on GPU-intensive training. As AI applications grow more complex and diverse, companies are seeking complete hardware stacks that can handle everything from data processing to real-time inference, changing how computing power is purchased and deployed across the AI industry.

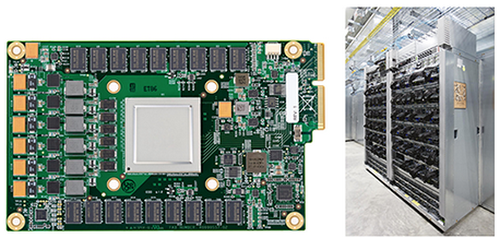

Hardware procurement for AI is changing. Where tech giants once bought discrete chips for specific functions, they now want systems that integrate GPUs, CPUs, and specialized hardware. The driver is a change in workload: companies are moving beyond training massive models to deploying agentic AI systems, which need efficient, low-latency computing across many kinds of tasks.

The Meta-Nvidia Deal: A Case Study in Ecosystem Purchasing

Nvidia's recent multi-year agreement with Meta departs from traditional chip purchasing patterns. According to WIRED's analysis, the deal includes not only millions of Nvidia Blackwell and Rubin GPUs but also "a large-scale deployment" of Nvidia's Grace CPUs as stand-alone components. Meta is the first major tech company to announce substantial purchases of Nvidia's CPUs as independent chips, signaling a move toward more balanced computing architectures.

This partnership expansion builds on Meta's existing infrastructure plans. The company previously estimated it would purchase 350,000 H100 chips from Nvidia by the end of 2024, with plans to access 1.3 million GPUs total by the end of 2025. The new agreement specifically mentions building "hyperscale data centers optimized for both training and inference," indicating a holistic approach to AI infrastructure that requires diverse computing components working in concert.

The Rise of Agentic AI and CPU Demand

The growing importance of CPUs in AI infrastructure reflects the emergence of agentic AI systems that place new demands on general-purpose computing architectures. As Ben Bajarin, CEO of Creative Strategies, explains in the WIRED report, "The reason why the industry is so bullish on CPUs within data centers right now is agentic AI, which puts new demands on general-purpose CPU architectures."

This shift represents a fundamental change in how AI companies approach computing power. While GPUs remain essential for training large models, CPUs are increasingly critical for managing data flows, preprocessing, and supporting inference workloads that don't require the parallel processing capabilities of GPUs. The industry is recognizing that efficient AI deployment requires balanced architectures where different types of processors handle specialized tasks.

Nvidia's Strategic Pivot to Complete Solutions

Nvidia's recent business moves show a deliberate strategy to position itself as a provider of complete computing ecosystems rather than just GPU hardware. According to WIRED's reporting, the company's $20 billion deal to license technology from chip startup Groq is its largest investment to date and is aimed specifically at "expanding access to high-performance, low cost inference."

This strategic shift reflects Nvidia's recognition that AI companies now need soup-to-nuts computing solutions. As one analyst describes it in the WIRED article, Nvidia is emphasizing "technology that connects various chips" as part of a comprehensive approach to compute power. The company has been positioning its hardware for inference computing needs for years, with CEO Jensen Huang estimating two years ago that Nvidia's business was likely "40 percent inference, 60 percent training."

Industry-Wide Diversification of Computing Sources

The trend toward complete computing ecosystems is occurring alongside broader industry efforts to diversify hardware sources. Major AI labs and technology companies are increasingly developing or customizing their own chips, creating competitive pressure on traditional hardware providers. According to WIRED's analysis, companies like Microsoft rely on mixtures of Nvidia GPUs and custom-designed chips, while Google primarily uses its own Tensor Processing Units alongside Nvidia hardware.

This diversification reflects both changing technical requirements and supply chain considerations. As Bajarin notes in the WIRED report, "The AI labs are looking to diversify because the needs are changing, yes, but it's still mostly that they just can't access enough GPUs. They're going to look wherever they can get the chips." The competition for computing power has led companies to explore multiple hardware pathways, from developing proprietary solutions to forming strategic partnerships with emerging chip manufacturers.

Implications for AI Infrastructure and Development

The shift toward complete computing ecosystems has significant implications for how AI companies design and scale their infrastructure. Companies must now consider not just raw computing power but also how different hardware components interact within complex systems. The WIRED article notes that CPU usage is accelerating to support AI training and inference; Microsoft's data centers for OpenAI, for example, require "tens of thousands of CPUs" to process and manage data generated by GPUs.

This evolution requires new approaches to data center design, software optimization, and hardware procurement. Companies must balance the need for specialized hardware with the practical realities of supply chain constraints and cost considerations. The move toward more integrated computing solutions represents both a technical necessity and a strategic response to the increasingly complex demands of modern AI applications.

Conclusion: The New Era of Computing Procurement

The days of purchasing discrete chips for specific AI functions are ending. In their place, companies are seeking complete computing ecosystems that can handle diverse workloads efficiently. Deals like Nvidia's partnership with Meta show how far AI applications have matured beyond training to include sophisticated inference and agentic systems. As that trend continues, companies will increasingly require balanced hardware architectures that integrate GPUs, CPUs, and specialized components, which changes not only what they buy but also how they plan the computing power behind the next generation of AI applications.